Companies that build vessel tracking software operate in a market shaped by persistent route volatility and heightened monitoring requirements. Clarksons Research estimates that global seaborne trade reached 12.9 billion tonnes in 2025, with shipping markets facing higher tonne-miles and greater operational complexity as rerouting, sanctions pressure, and shifting trade flows continued to reshape vessel deployment and voyage planning.

The pressure is visible in supply chain data as well. The World Bank reported that its Global Supply Chain Stress Index, which tracks shipping capacity delays, remained elevated in November 2025, reflecting pressure in the Mediterranean, the North Sea, and Southeast Asia.

The same update notes that shipping capacity had risen 16% since 2024 as new vessels entered service, even while freight rates kept falling. Data scale adds another layer of complexity.

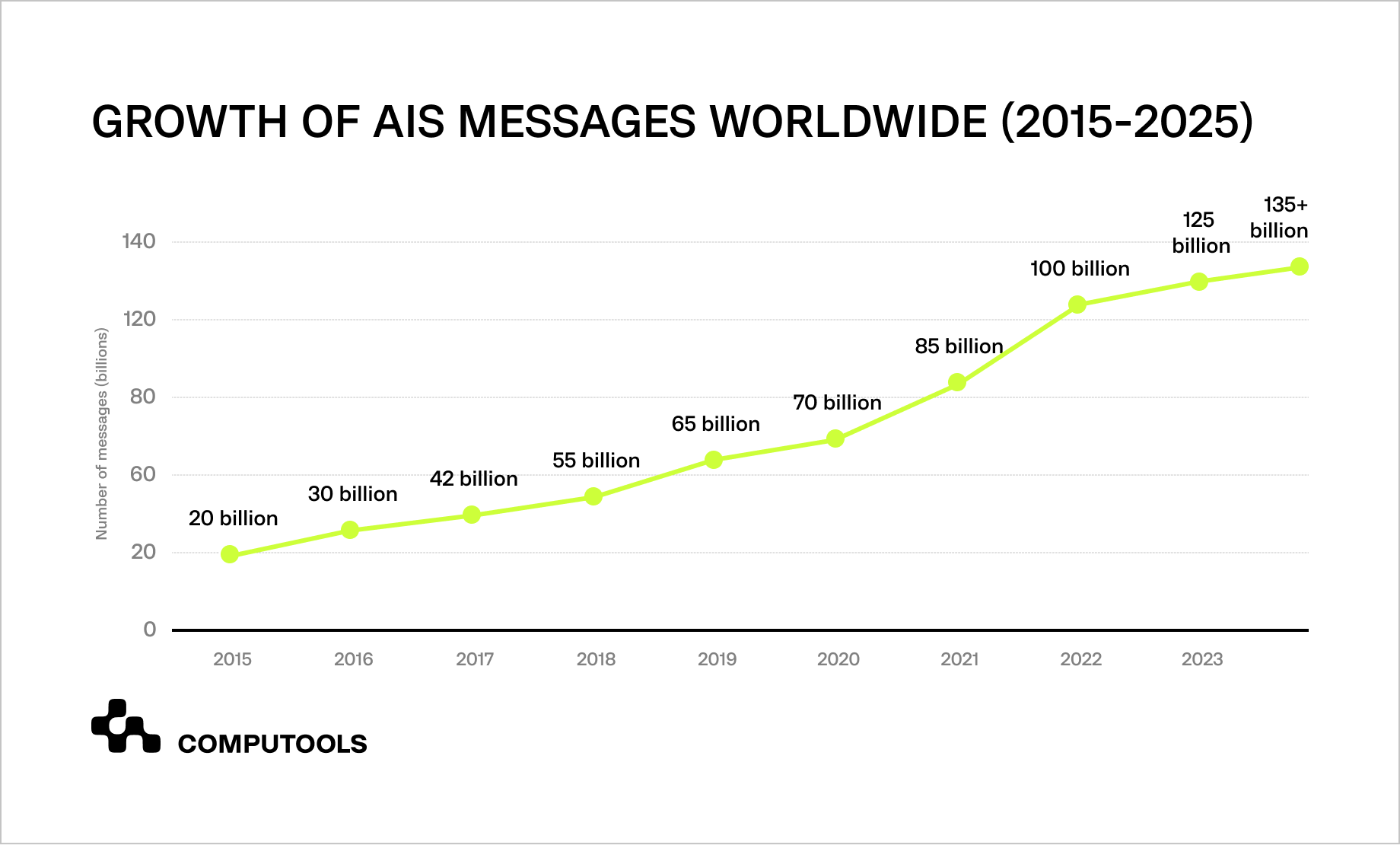

The Global Maritime Traffic Density Service says it has processed over 100 billion AIS messages spanning more than ten years of historical data and generated roughly 550 billion processed density values for global route analysis.

In parallel, NOAA Marine Cadastre continues to refresh AIS datasets, and its hub lists 2025 AIS Broadcast Point Data for October to December as available. That matters for teams building AIS vessel tracking software, because reliable maritime visibility depends on continuous AIS ingestion, historical traffic context, and a data pipeline that supports live monitoring, route analysis, and operational response without breaking track continuity across changing voyage conditions.

How Navis Horizon unified vessel and cargo tracking

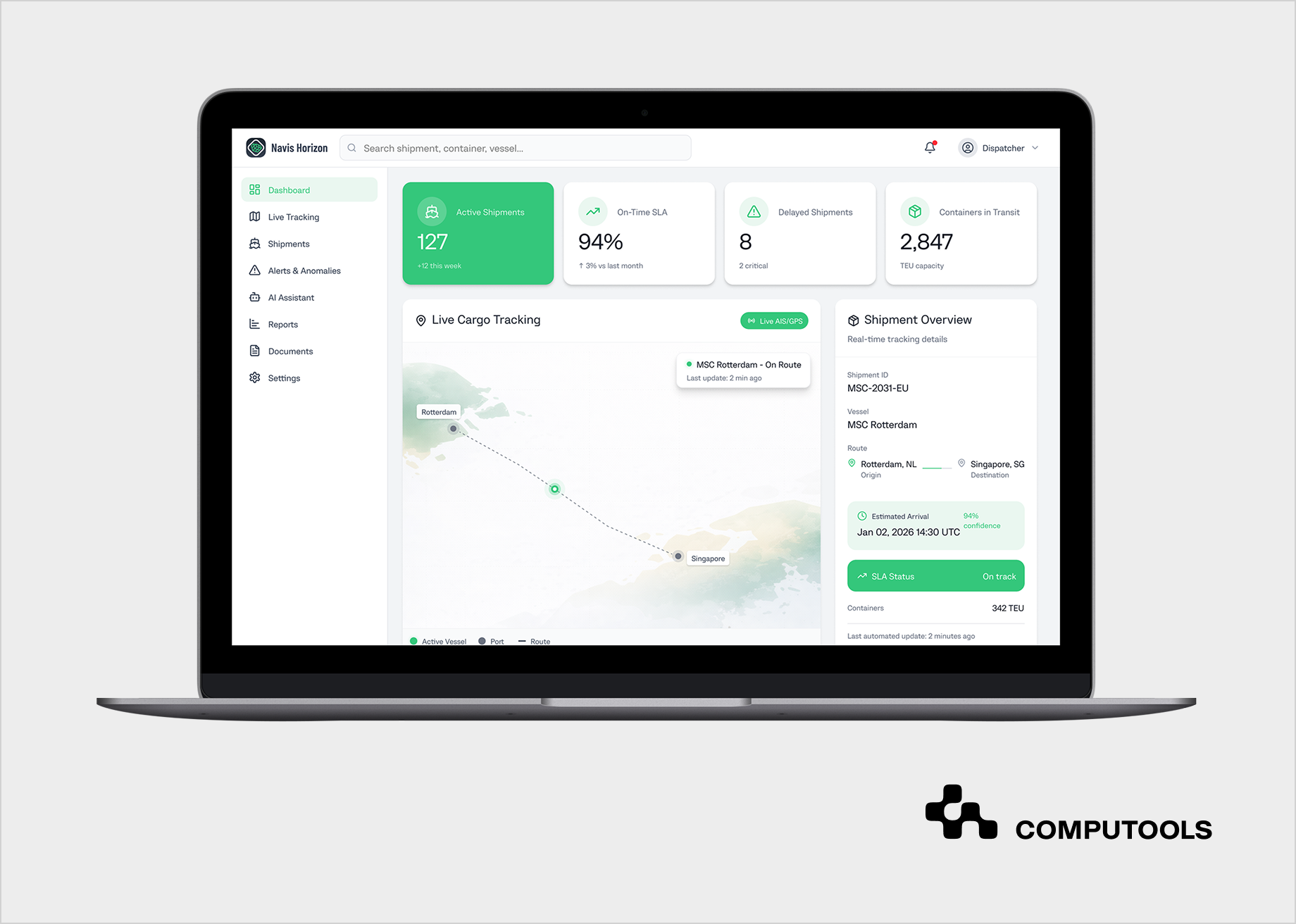

Navis Horizon shows how this model works in a real operational environment. The client, a port logistics operator in Hamburg, needed a maritime tracking software solution that could replace fragmented tracking workflows with a single, reliable view of cargo movement. Before the platform was introduced, dispatchers had to collect updates manually from carrier portals, port messages, and spreadsheets, while customers had limited visibility into shipment progress and frequently requested status checks. That created delays, inconsistent communication, and periodic SLA pressure across daily operations.

Computools addressed these challenges by providing maritime software development services to build a unified cargo visibility platform that consolidated AIS, GPS, and carrier-event data into a single operational interface.

The delivery also relied on software engineering services to define the data aggregation architecture, integrate multiple external systems into a stable real-time stream, and support dashboards, shipment timelines, alerts, and secure role-based access. As a result, the client gained one operational environment for monitoring vessel activity, cargo status, and route changes without switching between disconnected sources.

The platform also included AI development services to strengthen operational decision-making. An AI assistant was added for dispatchers and customers, providing anomaly alerts, predictive delay insights, shipment summaries, and faster access to documentation and timeline context.

According to the case results, Navis Horizon reduced dispatcher workload by 40%, increased customer satisfaction by 23%, accelerated shipment incident resolution by 18%, and delivered full cargo status transparency.

The Navis Horizon case demonstrates Computools’ practical experience in delivering maritime visibility platforms, and the next sections explain, step by step, how to build vessel tracking software using AIS and satellite data.

How to develop vessel tracking software using AIS and satellite data: 9-step guide

Step 1. Define the Tracking Scope and Operational Use Cases

Most projects in this area start inside broader logistics software development services, where vessel visibility is only one part of a larger operational workflow. The first step is to define what the platform must track, which teams will use it, and which daily decisions it must support. The scope usually includes vessel position updates, voyage progress, port approach, route deviations, ETA changes, cargo-related milestones, and alert conditions tied to delays or reporting gaps.

This definition shapes the whole product. Dispatchers need a dense operational view with route context, timeline events, anomaly signals, and access to related shipment details.

Customers need a cleaner interface with current vessel status, expected arrival, and major shipment updates.

Operations managers need aggregated visibility across routes, fleets, and exceptions. When these roles are not separated early, teams end up with overloaded dashboards, unclear event logic, and a system that produces data but doesn’t help anyone act on it.

The tracking model also has to set technical boundaries from the start. The team should define which vessel types and trade corridors fall within scope, how often positions need to be refreshed, what counts as a meaningful event, how ETA changes should be recorded, and which external systems will consume the output. These decisions affect source selection, storage design, alert rules, interface structure, and future scaling. A platform built for coastal monitoring has different update patterns and coverage needs than one designed for long-haul maritime operations with sparse offshore signals.

In Navis Horizon, Computools started with the client’s operational workflows rather than the interface. We mapped how dispatchers collected updates, where customer requests were created, which shipment events mattered most, and where fragmented tracking data slowed response time. That work defined the platform structure early: a dispatcher view for live operational control, a customer-facing visibility layer, and a unified event model built around cargo movement, vessel activity, delays, and status updates.

Step 2. Select AIS, Satellite, and Supporting Data Sources

A reliable tracking platform depends on source coverage before it depends on interface design. The team has to decide which position feeds, event streams, and contextual data sources will form the system’s core, how they will be prioritized, and where coverage gaps are acceptable. In long-range maritime operations, terrestrial AIS alone is rarely enough, so the architecture usually has to account for satellite vessel tracking systems as part of the baseline visibility model.

The main source layer usually combines terrestrial AIS, satellite AIS, carrier events, port status feeds, and internal shipment data. Each source serves a different purpose. Terrestrial AIS gives denser near-shore updates. Satellite AIS extends coverage across open water but may arrive at a lower frequency or with variable latency, depending on the provider and route conditions. Carrier and port feeds help confirm operational milestones that raw position data cannot explain on its own. Internal shipment data links vessel movement to bookings, cargo events, customer-facing updates, and SLA logic.

This step also requires a source quality model. The system has to know which feed is more reliable in a given geography, how to handle delayed or duplicated updates, and how to reconcile differences between reported position, carrier status, and actual voyage progression. Without that logic, the platform may show movement, but it will still fail when teams need accurate timelines, exception handling, or ETA updates tied to real operational events.
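A source quality model of this kind can be sketched as a lookup of per-region reliability scores combined with a freshness check. The example below is illustrative only: the region names, source labels, scores, the `Report` type, and the `pick_position` helper are all assumptions made for this sketch, not part of any provider's API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical reliability scores per (region, source). Real values would
# come from measured feed quality, not from constants like these.
RELIABILITY = {
    ("coastal", "terrestrial_ais"): 0.9,
    ("coastal", "satellite_ais"): 0.6,
    ("open_ocean", "terrestrial_ais"): 0.1,
    ("open_ocean", "satellite_ais"): 0.8,
}


@dataclass(frozen=True)
class Report:
    source: str
    lat: float
    lon: float
    observed_at: datetime


def pick_position(reports, region, now, max_age=timedelta(hours=1)):
    """Return the report from the most reliable source for this region.

    Reports older than max_age are ignored. Ties on reliability are
    broken by taking the most recent observation.
    """
    fresh = [r for r in reports if now - r.observed_at <= max_age]
    if not fresh:
        return None
    return max(
        fresh,
        key=lambda r: (RELIABILITY.get((region, r.source), 0.0), r.observed_at),
    )
```

With this shape, the same pair of reports can resolve to the terrestrial feed near shore and to the satellite feed offshore, which is the behavior the quality model is meant to encode.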

A good source strategy also separates current requirements from future expansion. Some products start with AIS and a limited set of partner integrations, then add weather layers, geofencing, congestion signals, port call intelligence, or risk indicators later. That decision should be deliberate. If the source model is too narrow, the system will struggle with blind spots and false confidence. If it is overloaded too early, the team will spend time ingesting data that does not improve operational decisions.

In Navis Horizon, Computools built the tracking layer around AIS, GPS, and carrier-event data, rather than relying on a single source for all visibility needs. That allowed the client to compare live movement signals with shipment events and route context within a single platform, reducing manual verification and improving the consistency of operational updates. The result was a stronger foundation for alerts, customer communication, and delay detection across fragmented maritime flows.

Step 3. Design the Data Ingestion and Streaming Architecture

Once the source model is defined, the platform requires an ingestion layer to handle continuous updates without losing track consistency. Maritime data doesn’t arrive in perfect order. Position reports have different timestamps, coverage varies geographically, and events from carriers or ports follow different update rhythms. The architecture must absorb, normalize, and deliver clean data to the tracking engine.

This is where AIS data integration for vessel tracking becomes a real engineering task rather than a connector list. The system has to ingest live AIS messages, merge them with satellite-supported updates and supporting event feeds, align timestamps, remove duplicates, and preserve source attribution. It also needs buffering logic for late-arriving records, confidence rules for conflicting updates, and a clear policy for what counts as the latest reliable vessel state. Without that layer, the map may still move, but route history, ETA logic, alerts, and customer-facing timelines will start drifting out of sync.
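The deduplication and late-arrival buffering described above can be sketched with a small reorder buffer. This is a minimal illustration under stated assumptions: the `PositionMsg` fields, the `ReorderBuffer` class, and the 120-second lateness window are hypothetical, and a production system would typically rely on a stream processor rather than in-memory structures.

```python
import heapq
from dataclasses import dataclass, field


@dataclass(order=True)
class PositionMsg:
    ts: float                              # observation time, epoch seconds
    mmsi: int = field(compare=False)
    lat: float = field(compare=False)
    lon: float = field(compare=False)
    source: str = field(compare=False)     # kept for source attribution


class ReorderBuffer:
    """Hold messages for `lateness` seconds so late records can be ordered."""

    def __init__(self, lateness=120.0):
        self.lateness = lateness
        self._heap = []
        self._seen = set()                 # (mmsi, ts) keys already accepted
        self._max_ts = float("-inf")

    def push(self, msg):
        """Accept one message and return any messages now safe to emit."""
        key = (msg.mmsi, msg.ts)
        if key in self._seen:              # same report from a second feed
            return []
        self._seen.add(key)                # unbounded here; expire in practice
        heapq.heappush(self._heap, msg)
        self._max_ts = max(self._max_ts, msg.ts)
        return self._drain(self._max_ts - self.lateness)

    def _drain(self, watermark):
        out = []
        while self._heap and self._heap[0].ts <= watermark:
            out.append(heapq.heappop(self._heap))
        return out

    def flush(self):
        """Emit everything still buffered, in timestamp order."""
        return self._drain(float("inf"))
```

A record that arrives later than the lateness window is emitted immediately by this sketch. A real pipeline would more likely route it to a correction path so that track history can be amended rather than reordered.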

A strong ingestion architecture also has to separate raw input from operational output. Raw AIS and event data should be stored in a way that supports replay, auditing, and model training, while the processed layer should feed current vessel state, movement history, exceptions, and timeline events into the interface with minimal delay. Message queues, stream processors, geospatial indexing, and event-driven services usually sit at the center of this design because the workload depends on throughput, ordering control, and low-latency delivery across multiple consumers.

The team should also decide early how the platform will behave when input quality drops. Offshore gaps, inconsistent update frequency, duplicated carrier events, or short-term provider latency should not cause the tracking layer to collapse. A production system needs clear fallback logic, stale-data handling, and rules for marking degraded visibility without breaking the rest of the workflow. That is what allows dispatchers and customers to trust the platform even when the incoming signal becomes uneven.
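One simple way to mark degraded visibility is to grade the age of the last known position against the update cadence expected for that vessel and coverage zone. The sketch below assumes hypothetical thresholds (two and six times the expected interval) and a hypothetical `Visibility` enum. The right values depend on the feeds and routes involved.

```python
from enum import Enum


class Visibility(Enum):
    LIVE = "live"
    DEGRADED = "degraded"
    STALE = "stale"


def classify_visibility(age_s, expected_interval_s):
    """Grade the last known position by its age relative to expected cadence.

    Thresholds are illustrative: up to 2x the expected interval counts as
    live, up to 6x as degraded, and anything older as stale.
    """
    if age_s <= 2 * expected_interval_s:
        return Visibility.LIVE
    if age_s <= 6 * expected_interval_s:
        return Visibility.DEGRADED
    return Visibility.STALE
```

Because the grade is relative to the expected interval, an offshore vessel reporting every few hours is not flagged as stale by the same rule that applies to a near-shore vessel reporting every few minutes.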

In our case, we focused on building a stable, real-time data layer that could aggregate AIS, GPS, carrier, and port-event inputs into a single, consistent stream. This gave the client a reliable operational foundation for shipment visibility, alerts, and timeline updates without constant manual reconciliation across disconnected sources.

Step 4. Build Vessel Identity Resolution and Track Continuity Logic

Raw position updates, on their own, do not form a usable tracking system. The platform has to determine which messages belong to the same vessel, how those messages connect to an active voyage, and when a new movement pattern should be treated as a separate operational event. That requires identity resolution across MMSI, IMO number, call sign, carrier references, and internal shipment links, along with logic that can preserve continuity when updates arrive late, overlap, or temporarily disappear.
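As a rough illustration of identity resolution, the sketch below validates the IMO number check digit (the first six digits weighted 7 down to 2, with the last digit of the sum matching the seventh digit) and uses a valid IMO number as the stable key, since an MMSI can change when a vessel is reflagged. The `VesselRegistry` class and its internal ID scheme are hypothetical, and the example covers only MMSI and IMO number, not call signs or carrier references.

```python
def is_valid_imo(imo):
    """Validate a 7-digit IMO number using its check digit."""
    if len(imo) != 7 or not (imo.isascii() and imo.isdigit()):
        return False
    total = sum(int(d) * w for d, w in zip(imo[:6], range(7, 1, -1)))
    return total % 10 == int(imo[6])


class VesselRegistry:
    """Map changing identifiers to one stable internal vessel ID."""

    def __init__(self):
        self._by_imo = {}
        self._by_mmsi = {}
        self._next_id = 1

    def _new_id(self):
        vid = self._next_id
        self._next_id += 1
        return vid

    def resolve(self, mmsi=None, imo=None):
        """Return the internal ID for a report, creating one if needed."""
        if imo and is_valid_imo(imo):
            vid = self._by_imo.get(imo)
            if vid is None:
                # Reuse an ID already known by MMSI, otherwise allocate one.
                vid = self._by_mmsi.get(mmsi) or self._new_id()
                self._by_imo[imo] = vid
            if mmsi is not None:
                self._by_mmsi[mmsi] = vid   # follow MMSI changes
            return vid
        if mmsi is not None:
            if mmsi not in self._by_mmsi:
                self._by_mmsi[mmsi] = self._new_id()
            return self._by_mmsi[mmsi]
        return None
```

In this design a report that carries only an MMSI still resolves to the right vessel once that MMSI has been seen together with a valid IMO number.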

This part is usually one of the hardest areas in vessel tracking software development because offshore signals are rarely clean. A vessel may move through zones with different update densities, switch between coverage conditions, or generate records that look inconsistent when viewed only as isolated points. The system has to reconstruct a continuous track from those fragments, connect it to the voyage context, and decide whether a route change, stop, gap, or jump should trigger a timeline event, an alert, or no action. If that logic is weak, operators get broken vessel histories, unreliable ETAs, and false exceptions that make the whole platform harder to trust.

Track continuity also affects every downstream function. Delay prediction depends on a clean movement history. Alerts depend on accurate state transitions. Customer-facing timelines depend on a stable event sequence rather than a raw stream of coordinates. That is why this step should include rules for stale positions, duplicated events, unexpected jumps, stop detection, route deviation thresholds, and confidence scoring for uncertain movement patterns. A map can still look active without those controls, but the operational layer behind it will remain fragile.
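Rules for unexpected jumps and stop detection often start from the speed implied by two consecutive positions. The following sketch uses the haversine great-circle distance and converts kilometres to nautical miles (1 nautical mile = 1.852 km). The `classify_step` helper and its 40-knot and 0.5-knot thresholds are assumptions for illustration, not recommended values.

```python
import math

EARTH_RADIUS_KM = 6371.0
KM_PER_NAUTICAL_MILE = 1.852


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points in kilometres."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def implied_speed_knots(prev, curr):
    """Speed implied by two (lat, lon, epoch_seconds) fixes."""
    hours = (curr[2] - prev[2]) / 3600.0
    if hours <= 0:
        return float("inf")   # out-of-order or identical timestamps
    distance_nm = haversine_km(prev[0], prev[1], curr[0], curr[1]) / KM_PER_NAUTICAL_MILE
    return distance_nm / hours


def classify_step(prev, curr, max_knots=40.0, stop_knots=0.5):
    """Label a step as 'jump', 'stopped', or 'moving' (thresholds hypothetical)."""
    speed = implied_speed_knots(prev, curr)
    if speed > max_knots:
        return "jump"
    if speed < stop_knots:
        return "stopped"
    return "moving"
```

A single slow step is not enough to declare a stop in practice. A fuller implementation would require the condition to hold across several fixes or a minimum duration before raising a timeline event.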

The data model should separate vessel identity from voyage identity because one vessel serves multiple trips, cargo assignments, and reporting periods. When the two are merged loosely, events from different voyages get mixed and the timeline loses clarity. Proper modeling allows teams to trace vessel movements, cargo status, and routes in a single timeline for operations, analytics, and customer communication.
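A minimal sketch of that separation keeps voyages as their own records, each pointing at a vessel and owning its own events. The `Voyage` fields and the `attach_event` helper are hypothetical names chosen for this example.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Voyage:
    voyage_id: str
    vessel_id: str
    start_ts: float
    end_ts: Optional[float] = None      # None while the voyage is still active
    events: List[str] = field(default_factory=list)

    def covers(self, ts):
        """True if ts falls inside this voyage's time window."""
        return self.start_ts <= ts and (self.end_ts is None or ts < self.end_ts)


def attach_event(voyages, vessel_id, ts, event):
    """Attach an event to the voyage of this vessel that was active at ts.

    Returns the voyage ID, or None if no voyage matches, so unmatched
    events can be reviewed instead of being silently merged.
    """
    for voyage in voyages:
        if voyage.vessel_id == vessel_id and voyage.covers(ts):
            voyage.events.append(event)
            return voyage.voyage_id
    return None
```

Keying events by voyage rather than by vessel is what prevents a late event from a completed trip from appearing on the timeline of the next one.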

In Navis Horizon, Computools treated tracking data as part of a continuous operational model rather than a loose collection of location updates. We connected vessel movement, shipment context, and status events into a single, coherent timeline, enabling more reliable delay detection, route monitoring, and customer-facing visibility.

Step 5. Create the Real-Time Tracking Engine and Event Layer

Once vessel identity and track continuity are in place, the platform needs a tracking engine that can turn incoming signals into the current vessel state, timeline events, and operational updates. This is the point at which raw movement data becomes real-time vessel monitoring software rather than a map that simply redraws coordinates. The system has to interpret movement patterns, detect state changes, assign events to the correct voyage context, and keep the operational view synchronized across dashboards, alerts, and customer-facing timelines.

The event layer usually sits at the center of this step. It defines what the platform should record as meaningful activity: departure, route deviation, stop, delay risk, arrival approach, geofence entry, reporting gap, or status change tied to cargo flow. Each event needs rules for creation, update, suppression, and display. If that logic is too loose, teams get noise. If it is too strict, the platform misses operational signals that matter for dispatching and customer communication. A good implementation balances event sensitivity with usability, so users see what changed and why without digging through raw data.
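To make the creation and suppression rules concrete, here is a small sketch of one event type, geofence entry and exit, that only emits an event on a state change. The circular `Geofence`, the `GeofenceEvents` tracker, and the event names are assumptions for illustration. Real port areas are usually polygons, and the distance check uses an equirectangular approximation that is only adequate for small radii.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_KM = 6371.0


@dataclass(frozen=True)
class Geofence:
    name: str
    lat: float
    lon: float
    radius_km: float

    def contains(self, lat, lon):
        """Approximate point-in-circle test, suitable for port-scale radii."""
        x = math.radians(lon - self.lon) * math.cos(math.radians((lat + self.lat) / 2))
        y = math.radians(lat - self.lat)
        return EARTH_RADIUS_KM * math.hypot(x, y) <= self.radius_km


class GeofenceEvents:
    """Emit entry and exit events only when a vessel's state changes."""

    def __init__(self, fence):
        self.fence = fence
        self._inside = {}    # vessel_id -> last known inside/outside state

    def update(self, vessel_id, lat, lon):
        now_inside = self.fence.contains(lat, lon)
        was_inside = self._inside.get(vessel_id)
        self._inside[vessel_id] = now_inside
        if was_inside is None or was_inside == now_inside:
            return None      # first sighting or no change: suppress
        return "geofence_entry" if now_inside else "geofence_exit"
```

Suppressing repeated positions inside the fence is the simplest form of the noise control discussed above: the dispatcher sees one entry event, not one per position report.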

The tracking engine must support live synchronization, ensuring position streams, timeline cards, alerts, and vessel status panels update from a unified processed state rather than separate logic fragments. This requires a clean state model, rapid update propagation, and stable handling of short-term source feed inconsistencies. When well-designed, operators can monitor evolving routes, review movement history, and respond to new exceptions instantly.

This layer often defines how the product feels in daily use. Strong sources and a solid backend will not matter if the live state engine is weak, because the platform will still behave unreliably. Lagging statuses, inconsistent timelines, and the need for manual verification erode trust, which is why the real-time layer should be treated as core platform logic, not just a visual feature.

In our project, we built the platform around live status propagation, so dispatchers and customers could see changes as they happened rather than after manual confirmation. Automated event handling, synchronized timelines, and real-time interface updates turned the system into an active monitoring environment instead of a passive tracking screen.

Step 6. Develop ETA Prediction, Alerts, and Exception Monitoring

A tracking platform becomes operationally useful when it can interpret movement patterns and surface the next likely problem before a dispatcher has to find it manually. ETA logic, delay prediction, and exception monitoring sit on top of the tracking engine and convert vessel movement into decisions, not just status updates. Without this layer, the system can show where a vessel is now, but it cannot explain whether the route is slipping, whether the expected arrival still makes sense, or whether customer communication should be updated.

This step becomes more difficult in open-water operations because satellite AIS vessel tracking rarely provides a continuous stream of signals. Offshore updates may be sparse, delayed, or inconsistent across providers, so the platform has to combine historical voyage behavior, current route progression, event context, and coverage quality when calculating ETA and risk. A useful prediction model usually considers route history, average speed profiles, stop patterns, port approach behavior, and the timing of related operational events. That gives teams a working forecast instead of a timestamp pulled from the latest coordinate and dressed up as certainty.
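A very simple version of this idea blends the vessel's current speed with a historical speed profile and trusts the live value less when coverage is poor. The sketch below is a deliberately naive baseline, not a production model: the `estimate_eta` function, its parameters, and the linear blending rule are assumptions, and real systems would also account for stop patterns, port approach behavior, and related events.

```python
from datetime import datetime, timedelta


def estimate_eta(now, remaining_nm, current_knots, historical_knots, coverage_quality):
    """Estimate arrival time from remaining distance and a blended speed.

    coverage_quality is a 0..1 score: 1.0 means the live speed is fully
    trusted, 0.0 means only the historical profile is used.
    Returns None when the blended speed is not positive.
    """
    weight = min(max(coverage_quality, 0.0), 1.0)
    speed = weight * current_knots + (1.0 - weight) * historical_knots
    if speed <= 0:
        return None
    return now + timedelta(hours=remaining_nm / speed)
```

For example, with 240 nautical miles remaining, a live speed of 10 knots, a historical speed of 14 knots, and a coverage quality of 0.5, the blended speed is 12 knots and the estimate lands 20 hours after `now`.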

Alerts also need structure. The system should distinguish between a routine variation and a meaningful exception, then assign the right response. Common triggers include route deviation, prolonged stop, reporting gap, slowing patterns before arrival, missed milestone timing, and predicted delay beyond a defined threshold. Every alert should carry context: what changed, how serious it is, which vessel or shipment it affects, and whether the signal came from movement data, event mismatch, or model inference. If the alert layer is vague, users ignore it. If it is too aggressive, they stop trusting the platform altogether.
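The alert structure described above can be sketched as a small record that carries its own context, plus a rule that separates routine variation from a real exception. The `Alert` fields, the `Severity` levels, and the 6-hour and 24-hour thresholds are hypothetical and would need to be tuned per route and customer.

```python
from dataclasses import dataclass
from enum import Enum


class Severity(Enum):
    INFO = 1
    WARNING = 2
    CRITICAL = 3


@dataclass(frozen=True)
class Alert:
    vessel_id: str
    kind: str
    severity: Severity
    origin: str      # "movement", "event_mismatch", or "model"
    detail: str      # human-readable description of what changed


def delay_alert(vessel_id, delay_hours, warn_after=6.0, critical_after=24.0):
    """Return an Alert for a predicted delay, or None for routine variation."""
    if delay_hours < warn_after:
        return None
    severity = Severity.CRITICAL if delay_hours >= critical_after else Severity.WARNING
    return Alert(
        vessel_id=vessel_id,
        kind="predicted_delay",
        severity=severity,
        origin="model",
        detail=f"ETA slipped by {delay_hours:.1f} h",
    )
```

Recording the origin on every alert lets the interface show whether a warning came from observed movement, an event mismatch, or model inference, which helps users decide how much weight to give it.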

The platform should also connect predictions and exceptions to visible workflow actions. Dispatchers need a concise view of what requires intervention. Customers need timely updates without having to see raw internal diagnostics. Operations managers need enough aggregation to understand route performance and recurring disruption patterns. That means ETA logic and alerting should feed dashboards, timelines, summaries, and notifications from the same processed state rather than from separate calculation paths.

In Navis Horizon, we added predictive delay detection, anomaly alerts, and AI-generated summaries to help teams act before disruptions escalated. This allowed dispatchers to respond faster to route changes and gave customers clearer visibility into delays, shipment status, and expected next steps.

If you want to explore how AI agents extend maritime visibility platforms beyond alerts and ETA logic, read our guide on AI Agents in Maritime Logistics: Automating Vessel Operations, Cargo Monitoring, and Dispatch Decisions.

Step 7. Build User Interfaces for Dispatchers, Operators, and Customers

A tracking platform becomes usable only when its interface aligns with the decisions each user has to make. That is why most teams end up building custom maritime tracking software rather than relying on a generic dashboard layer. Dispatchers need a dense operational workspace with live vessel status, timeline events, alerts, route context, and quick access to shipment details. Customers need a simpler visibility layer with current location, ETA updates, delay information, and relevant documentation. Operations managers usually need broader monitoring across routes, fleets, and recurring exceptions.

These views should be designed around workflows, not around raw data availability. A dispatcher does not need a screen full of coordinates if the real task is to spot delay risk, confirm a route change, or quickly answer a status request. A customer does not need internal operational noise if the real task is to understand where the cargo is, whether the schedule has changed, and what happens next. The user interface has to convert a shared tracking engine into role-specific decisions, with the right level of detail, the right update cadence, and the right actions available in context.

This step also defines how users move through the product. Search, filters, vessel cards, timeline views, map behavior, alert panels, and document access must work as a single system rather than as separate widgets on a screen. The difference shows up fast in daily use. When navigation is weak, teams waste time jumping between screens and rechecking the same shipment from different angles. When properly designed, the platform supports monitoring, investigation, and communication within a single flow.

The interface should also stay stable under live update pressure. Frequent status changes, new timeline events, and alert propagation can easily make the product noisy or visually exhausting. That is why state management, layout hierarchy, and update behavior matter as much as map rendering. Users need to notice what changed without losing orientation. In a real operational environment, clarity is a reliability feature, not a cosmetic one.

For Navis Horizon, we designed separate interface layers for dispatchers and customers, each tailored to distinct decision-making and levels of detail. That structure reduced cognitive load, improved self-service visibility, and enabled each user group to work with the same tracking system in line with its actual responsibilities.

Step 8. Secure the Platform and Prepare It for Scale

By this point, the platform already has source coverage, track logic, live monitoring, alerts, and role-based interfaces. The next task is to ensure the system can withstand real operational load without exposing sensitive data or degrading under constant updates. In practice, ship-tracking software development reaches production quality only when security, access control, and scalability are built into the core architecture rather than added as cleanup work before launch.

The security model has to reflect how maritime platforms are actually used. Dispatchers, customers, managers, and external partners do not need the same level of access, so the system should enforce clear role boundaries across maps, timelines, alerts, documents, and shipment history. Encryption for data in transit and at rest, auditability for critical actions, secure API communication, and tenant-aware access rules all matter here because the platform holds operationally sensitive data, including routes, customer information, and event logs. When these controls are weak, the product may still function, but it cannot be trusted in a business environment where visibility data influences service commitments and internal decisions.
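Role boundaries and tenant-aware access rules can be illustrated with a small permission check. The role names, permission strings, and the `can_access` function below are assumptions for this sketch. A production system would normally delegate this to an established authorization framework rather than a hand-written table.

```python
# Hypothetical role-to-permission mapping for illustration only.
ROLE_PERMISSIONS = {
    "dispatcher": {"map:view", "timeline:view", "alerts:view", "alerts:ack", "documents:view"},
    "customer": {"map:view", "timeline:view", "documents:view"},
    "manager": {"map:view", "timeline:view", "alerts:view", "reports:view"},
}


def can_access(role, permission, user_tenant, resource_tenant):
    """Allow access only when tenants match and the role grants the permission.

    Unknown roles get no permissions, so the check fails closed.
    """
    if user_tenant != resource_tenant:
        return False
    return permission in ROLE_PERMISSIONS.get(role, set())
```

Checking the tenant before the role means that even a correctly permissioned user cannot read another organization's shipment history.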

Scalability should be handled with the same level of discipline. Vessel traffic, event density, interface activity, and model inference load rarely grow in a perfectly linear way, especially when new routes, carriers, or user groups are added. The architecture needs modular services, stable ingestion throughput, reliable storage for tracking history, and a live delivery layer that can keep maps, alerts, and timelines synchronized without introducing lag. That also means thinking about graceful degradation. If one provider slows down, if update density spikes, or if a route suddenly produces more exceptions than usual, the rest of the platform should remain stable and readable instead of turning into a cascade of stale or conflicting updates.

Production readiness also depends on how the team releases and tests the system. Incremental rollout, repeated testing cycles, controlled feature expansion, and performance checks under live traffic help expose issues before they spread across the whole platform. A vessel-tracking product is not finished just because the map works. It is ready when the system can process data continuously, enforce access rules correctly, hold state under pressure, and keep the operational view coherent across different user roles and changing maritime conditions.

In Navis Horizon, Computools delivered a platform with role-based access control, encryption, GDPR-aligned security, and a modular backend ready for expansion. This gave the client a system that could support additional routes, carriers, and operational features without losing stability or control over sensitive shipment data.

Step 9. Test with Live Routes, Validate Performance, and Expand Coverage

The final step is to move the platform from a controlled technical build into a working operational system. This is where ship tracking system development is validated against live routes, unstable signal conditions, real user behavior, and growing data volumes, without breaking the tracking model. A vessel tracking platform should be tested against real route scenarios, not just clean development datasets, because live operations quickly expose timing gaps, noisy events, interface overload, and weak alert thresholds.

Validation should extend beyond uptime. It should include comparing predicted and actual arrival patterns, evaluating alert accuracy, reviewing stale-data behavior, testing route continuity with sparse updates, and ensuring customer timelines align with what dispatchers see. This stage reveals whether the platform helps teams work faster or just reproduces existing problems, and it allows clients to refine thresholds, adjust event logic, and identify workflow automation opportunities.
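Comparing predicted and actual arrivals can start with two basic numbers: the mean absolute error and the bias of the ETA forecast. The helper names below are hypothetical, and the sketch assumes the two lists are aligned so that each prediction pairs with the actual arrival of the same voyage.

```python
from datetime import datetime


def eta_errors_hours(predicted, actual):
    """Signed error per voyage in hours (positive means predicted too late)."""
    return [(p - a).total_seconds() / 3600.0 for p, a in zip(predicted, actual)]


def mean_absolute_error(errors):
    """Average size of the error, ignoring direction."""
    return sum(abs(e) for e in errors) / len(errors)


def bias(errors):
    """Average signed error. A value far from zero suggests systematic drift."""
    return sum(errors) / len(errors)
```

A low bias with a high mean absolute error points to noisy predictions, while a consistent bias suggests a systematic issue such as an outdated speed profile for a route.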

Rollout works best when it expands in controlled layers. A team may start with selected routes, a limited vessel group, or a specific user segment, then extend the platform once the tracking logic, ETA behavior, and notification flows hold up under real load. That approach reduces operational risk and makes it easier to identify where source quality, interface design, or model performance still need correction. Once the core flow is stable, the product can support additional carriers, broader route coverage, more users, and new monitoring features without forcing a structural rebuild.

This step also closes the feedback loop between engineering and operations. Vessel tracking software improves when route teams, dispatchers, support staff, and customer-facing users can review how the platform behaves in practice and feed that back into the product. Testing, rollout, and expansion should be treated as a single process. Otherwise, the system may launch successfully and still fail to become part of daily decision-making.

In Navis Horizon, Computools released the platform in iterative phases, with regular testing cycles and early client feedback shaping each stage of delivery. That rollout model helped validate the system under real operating conditions and turned the platform into a stable, long-term operational tool rather than a one-time implementation.

For companies modernizing broader logistics operations beyond maritime tracking, our guide to Warehouse Robotics Software: Control Systems for Autonomous Picking and Sorting covers another layer of operational automation.

Want to engineer a high-accuracy vessel tracking solution with global coverage? Reach out to our experts to plan data ingestion, processing, and deployment.

4 trends shaping how companies build vessel tracking software

1. Route volatility is changing the baseline for tracking architecture

Vessel tracking platforms are now designed for longer voyages, rerouting pressure, and less predictable traffic patterns. UNCTAD reports that average voyage haul increased from 4,831 miles in 2018 to 5,245 miles in 2024, while trade flows continued to shift under geopolitical and security pressure. For product teams, this raises the bar for route monitoring, ETA control, and exception handling. Systems have to maintain continuity across longer distances, uneven update density, and changing corridor conditions.

2. An AIS-based ship tracking platform is no longer expected to stop at position display

The market has moved beyond map-only visibility. EMSA’s Automated Behavior Monitoring is built to detect anomalous or specific vessel behavior from position reports, and its 2026-2028 work program continues expanding monitoring algorithms, backend interfaces, and data-combination capabilities. That shifts expectations for an AIS-based ship tracking platform. Operators increasingly need systems that can identify reporting gaps, suspicious movement patterns, route deviations, and delay signals, rather than just showing coordinates without interpretation.

3. Historical AIS data is becoming more operationally valuable

Tracking quality now depends on historical context as much as on live feeds. NOAA’s Marine Cadastre lists 2025 AIS Broadcast Point Data for October to December as available, reflecting ongoing AIS dataset updates. Consistent historical coverage is vital for route baselines, anomaly detection, traffic analysis, and model training. Beyond live monitoring, teams creating maritime products require storage and replay capabilities for investigation, forecasting, and route pattern analysis.

4. Vessel monitoring system software is starting to rely on broader satellite intelligence

AIS remains a core part of maritime tracking, but the supporting technology stack is expanding. ESA notes that Sentinel-1C and Sentinel-1D improve maritime traffic monitoring through integrated AIS, while the EO-VTI project combines satellite data, AIS analytics, and explainable risk scoring to help identify deceptive or high-risk maritime activity. This pushes vessel monitoring system software toward multi-source monitoring, risk interpretation, and stronger support for compliance, insurance, and operational control.

Why companies choose Computools

Companies choose Computools when they need a team that can turn fragmented maritime data, live tracking inputs, and operational workflows into a stable product with measurable results.

Our maritime software development services cover discovery, architecture, engineering, testing, and rollout, with a strong focus on real-time visibility and business-specific logic. Across our logistics direction, Computools highlights 45% faster delivery operations, 35% cost savings, 250+ experts, and 40+ projects delivered.

That delivery model is reflected in our case work. In Navis Horizon, we built one of our maritime fleet tracking software solutions for a port logistics operator, consolidating AIS, GPS, and carrier-event data into one platform. The result was a 40% reduction in dispatcher workload, a 23% increase in customer satisfaction, and an 18% faster resolution of shipment incidents.

Our IoT development services add value in projects where live operational signals matter beyond interface logic.

In the Railway System case, for example, Computools developed a real-time cargo fleet positioning system with instant alerts for deviations in key safety parameters, while HubMarine used sensors to support berth allocation and smarter marina operations. Together, these cases show that we can build maritime platforms that integrate software, live data, and operational control in a single environment.

Want to discuss your vessel tracking platform? Contact our team at info@computools.com to talk through your requirements, data sources, and delivery scope.

Looking for a broader view of the market? See our overview of the Top 20 Maritime Software Development Companies Globally.

To sum up

To build vessel tracking software that works in real maritime operations, companies need more than live coordinates and basic map logic. Effective ship tracking software development requires scalable data processing, reliable track continuity, actionable alerts, a secure architecture, and interfaces designed for operational decision-making.

When these parts work together, the platform improves visibility, reduces manual effort, and gives teams a system they can rely on every day.

Computools

Software Solutions

Computools is an IT consulting and software development company that delivers innovative solutions to help businesses unlock tomorrow.
